In early June, my PhD student Sam Coward (co-advised by Theo Drane from Intel) will travel to ARITH 2024 in Málaga to present some of our most recent work, “Combining Power and Arithmetic Optimization via Datapath Rewriting”, a joint paper with Emiliano Morini, also of Intel. In this blog post, I will describe the fundamental idea of our work.

It’s well known that ICT is driving a significant amount of energy consumption in the modern world. The core question of how to organise the fundamental arithmetic operations in a computer in order to reduce power (energy per unit time) has been studied for a long time, and continues to be a priority for designers across industry, including the group at Intel with whom this work has been conducted.

Readers of this blog will know that Sam has been doing great work on how to explore the space of behaviourally equivalent hardware designs automatically. First for area, then for performance, and now for power consumption!

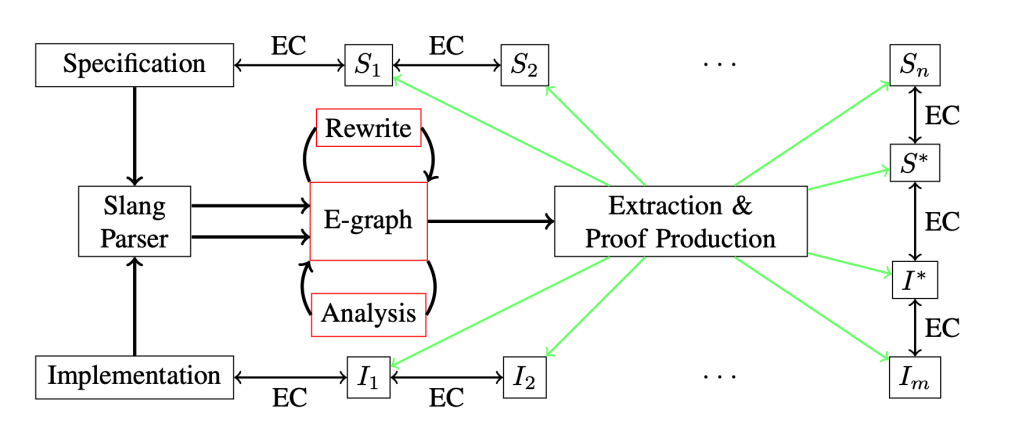

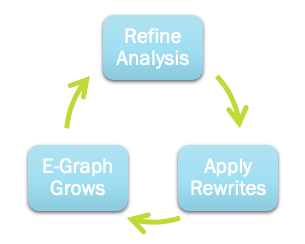

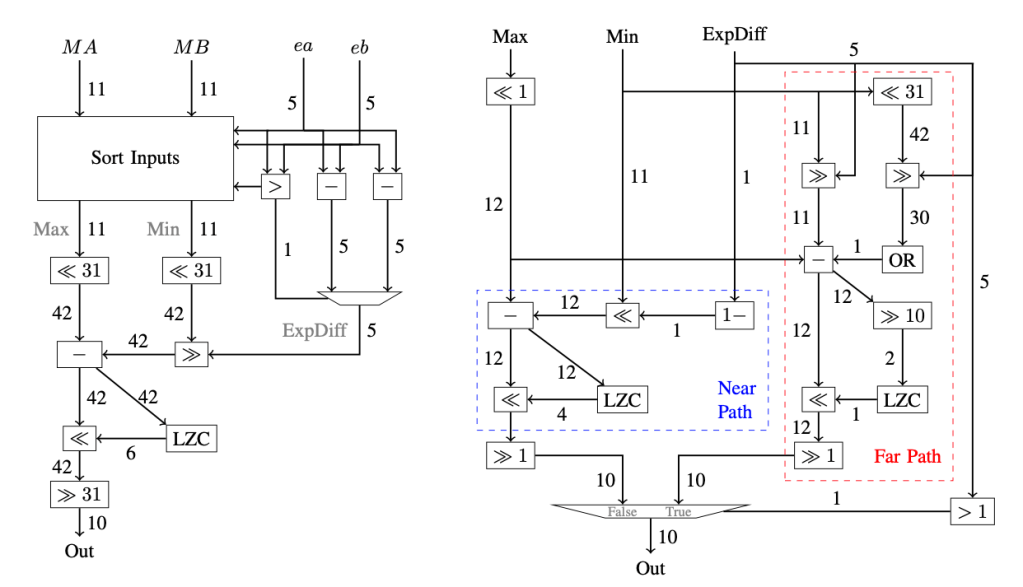

In our latest work, Sam looks at how we can use the e-graph data structure, and the related egg tool, to tightly integrate arithmetic optimisations (like building multi-input adders in hardware) with clock gating and data gating, two techniques for power saving. Clock gating avoids clocking new values into registers in hardware if we know they’re not going to be used in a given cycle; this avoids the costly switching activity associated with propagating unused information in a digital circuit. Data gating also avoids switching, but in a different way – by replacing operands with values inducing low switching: for example, if I do not end up using a result of , then I may as well be computing

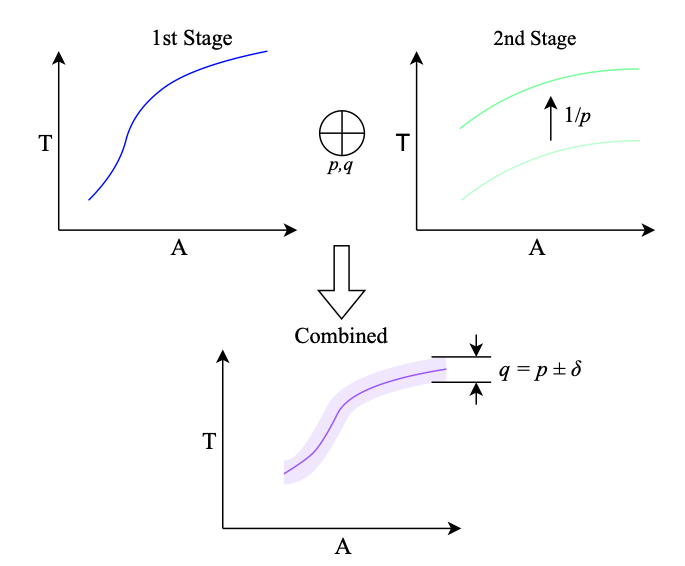

. In both cases, the fundamental issue becomes how to identify whether a value will be unused later in a computation. Intriguingly, this question is tightly related to the way a computation is performed: there are many ways of computing a given mathematical computation, and each one will have its own redundancies to exploit.
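To make the two gating ideas concrete, here is a toy Python sketch that counts bit flips on an operand bus. It is an illustration only, not the power model used in the paper or in ROVER: the function names, the example stream, and the choice of zero as the "quiet" value are all assumptions made for this post.

```python
def hamming(a: int, b: int) -> int:
    """Number of bit positions in which a and b differ."""
    return bin(a ^ b).count("1")


def toggles(stream: list[int]) -> int:
    """Total bit flips on a bus that carries `stream` cycle by cycle."""
    return sum(hamming(prev, cur) for prev, cur in zip(stream, stream[1:]))


def data_gate(operand: list[int], used: list[bool]) -> list[int]:
    """Data gating (assumed form): force the operand to 0 when the result is unused."""
    return [x if u else 0 for x, u in zip(operand, used)]


def clock_gate(data: list[int], enable: list[bool], reset: int = 0) -> list[int]:
    """Clock gating: the register only loads a new value on enabled cycles."""
    held, out = reset, []
    for x, en in zip(data, enable):
        if en:
            held = x
        out.append(held)
    return out


# Hypothetical operand streams; the product a*b is only consumed in cycles 0 and 5.
a = [3, 7, 1, 5, 2, 6]
b = [0b1010, 0b0101, 0b1111, 0b0000, 0b1100, 0b0011]
used = [True, False, False, False, False, True]

b_data_gated = data_gate(b, used)
b_clock_gated = clock_gate(b, used)

print("ungated toggles:     ", toggles(b))
print("data-gated toggles:  ", toggles(b_data_gated))
print("clock-gated toggles: ", toggles(b_clock_gated))
```

In every cycle where the product is actually used, both gated streams carry the same operand as the original, so the visible behaviour is unchanged; only the activity in the unused cycles differs.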

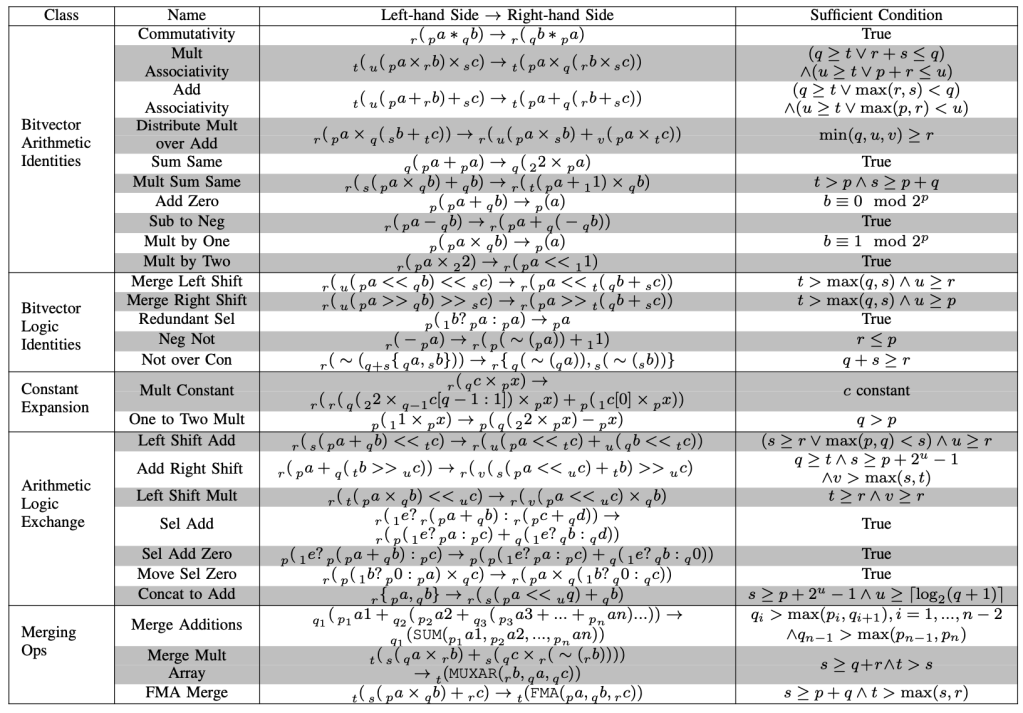

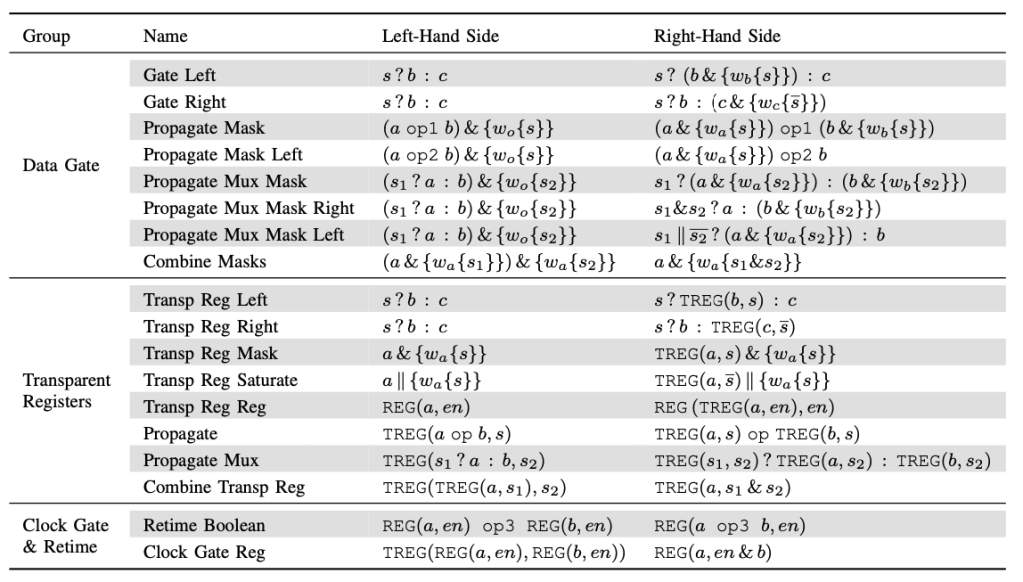

In our ARITH 2024 paper, Sam has shown how data gating and clock gating can be expressed as rewrites over streams of Boolean data types, lifting our previous work, which looked at equivalences between bit vectors, to equivalences over streams of bit vectors. In this way, he’s able to express both traditional arithmetic equivalences and equivalences expressing clock and data gating within the same rewriting framework. A collection of these latter equivalences is shown in the table below, taken from our paper.

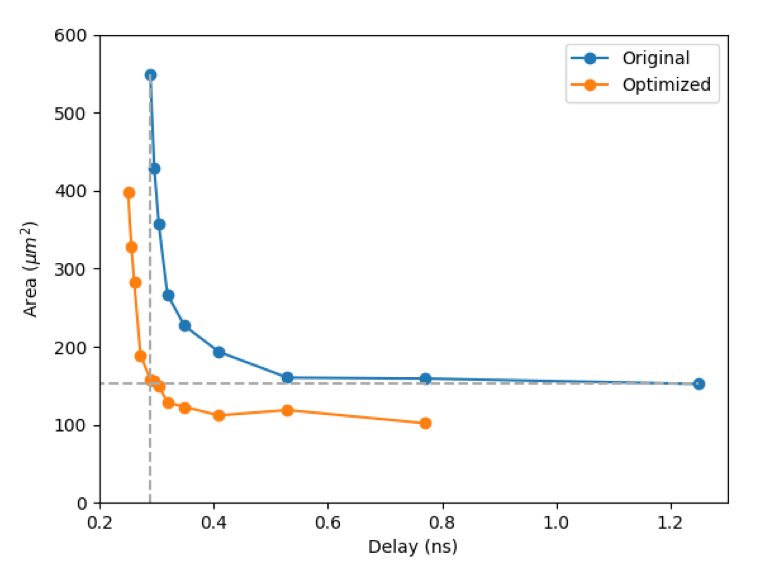
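As a rough illustration of how an arithmetic rewrite and a gating rewrite can live in one framework, the Python sketch below applies two rules to a small expression tree and checks equivalence by brute force. Everything here is an assumption for illustration: the tuple encoding, the `gate` operator, and both rules are hypothetical and are not taken from the table above, and the code does not use egg or an e-graph (which would keep all equivalent forms at once rather than applying rules in a fixed order). Values are checked one cycle at a time, which stands in for a pointwise check over streams.

```python
from itertools import product

# Expressions are nested tuples; leaves are variable names (strings).
# ("add", x, y), ("mul", x, y), ("mux", sel, then, other), ("gate", sel, x)


def evaluate(expr, env):
    """Evaluate an expression for one cycle under the variable bindings in env."""
    if isinstance(expr, str):
        return env[expr]
    op, *args = expr
    vals = [evaluate(arg, env) for arg in args]
    if op == "add":
        return vals[0] + vals[1]
    if op == "mul":
        return vals[0] * vals[1]
    if op == "mux":
        return vals[1] if vals[0] else vals[2]
    if op == "gate":  # data gating: pass x when sel is set, otherwise 0
        return vals[1] if vals[0] else 0
    raise ValueError(f"unknown operator: {op}")


def is_op(expr, op):
    return isinstance(expr, tuple) and expr[0] == op


def factor(expr):
    """Arithmetic rewrite: a*b + a*c -> a*(b + c). Returns None if it does not apply."""
    if is_op(expr, "add") and is_op(expr[1], "mul") and is_op(expr[2], "mul"):
        if expr[1][1] == expr[2][1]:
            return ("mul", expr[1][1], ("add", expr[1][2], expr[2][2]))
    return None


def gate_mul_under_mux(expr):
    """Gating rewrite: mux(s, a*b, e) -> mux(s, gate(s, a)*b, e)."""
    if is_op(expr, "mux") and is_op(expr[2], "mul"):
        _, s, (_, a, b), e = expr
        return ("mux", s, ("mul", ("gate", s, a), b), e)
    return None


def count_op(expr, op):
    """Count occurrences of an operator in an expression tree."""
    if isinstance(expr, str):
        return 0
    return (expr[0] == op) + sum(count_op(arg, op) for arg in expr[1:])


def equivalent(lhs, rhs, names, width=2):
    """Exhaustively compare two expressions over all small input values."""
    for values in product(range(2 ** width), repeat=len(names)):
        env = dict(zip(names, values))
        if evaluate(lhs, env) != evaluate(rhs, env):
            return False
    return True


original = ("mux", "s", ("add", ("mul", "a", "b"), ("mul", "a", "c")), "d")
factored = ("mux", "s", factor(original[2]), "d")  # arithmetic rewrite first
gated = gate_mul_under_mux(factored)               # gating rule now applies

print(gated)
print(count_op(original, "mul"), "multipliers before,", count_op(gated, "mul"), "after")
```

The gating rule does not match the original expression, because the selected branch is an addition; it only applies once the factoring rewrite has turned that branch into a single multiplication. This is the kind of interaction between arithmetic and gating rewrites that the sketch is meant to convey.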

Sam has been able to show that by combining the rewrites creatively, using arithmetic rewrites to expose new opportunities for gating, our tool ROVER is able to save some 15% to 30% of power consumption over a range of benchmark problems of industrial interest. Moreover, ROVER will automatically adjust the whole design to better suit different switching profiles, knowing that rarely-switching circuit components are less problematic for energy, and prioritising the exposure of rewrites where they are needed.

I think this is really interesting work, and shows just how general the e-graph approach to circuit optimisation can be. If you’re going to ARITH 2024, do make sure to talk to Sam and find out more. If not, make sure to read his paper!