Ever since primary (elementary) school, we’ve known that multiplying an integer by 10 is easy. No need for the long written calculations we churn through when doing multiplication by hand. Just stick an extra zero on the end, and we’re done. Multiplication is (relatively) hard, concatenation of digits is easy. And yet, in this case, they’re equivalent in terms of the operation they perform.

Similar equivalences abound in the design of digital hardware for arithmetic computation, and my PhD student Sam Coward (jointly supervised by Theo Drane from Intel) has been devising ways to automatically take advantage of such equivalences to make Intel hardware smaller and more efficient. He will be presenting our work on this topic at the main computer arithmetic conference, ARITH, next week. The conference will be online, and registration is free: https://arith2022.arithsymposium.org.

Let’s explore this example from our early school years a bit more. I’ll use Verilog notation to denote a function taking two bit vectors and concatenating them together. Of course the ‘multiplication by 10 is easy’ becomes ‘multiplication by 2 is easy’ in binary. Putting this together, we can write

, meaning that multiplication by two is the same as concatenation with a zero. But what does ‘the same as’ actually mean here? Clearly they are not the same expression syntactically and one is cheap to compute whereas one is expensive. What we mean is that no matter which value of

I choose, the value computed on the left hand side is the same as the value computed on the right hand side. This is why I’ve chosen to write

rather than

.

clearly defines a relation on the set of expressions. This is a special kind of relation called a congruence: it’s an equivalence relation, i.e. it is symmetric, transitive, and reflexive, but it also ‘plays well’ with function application: if

then it necessarily follows that

for every function symbol

. Like any equivalence relation on a set,

partitions the set into a set of equivalence classes: in our setting a class corresponds to expressions that can be freely interchanged without changing the functionality of our hardware, even if it changes the performance, area or energy consumption of the resulting design.

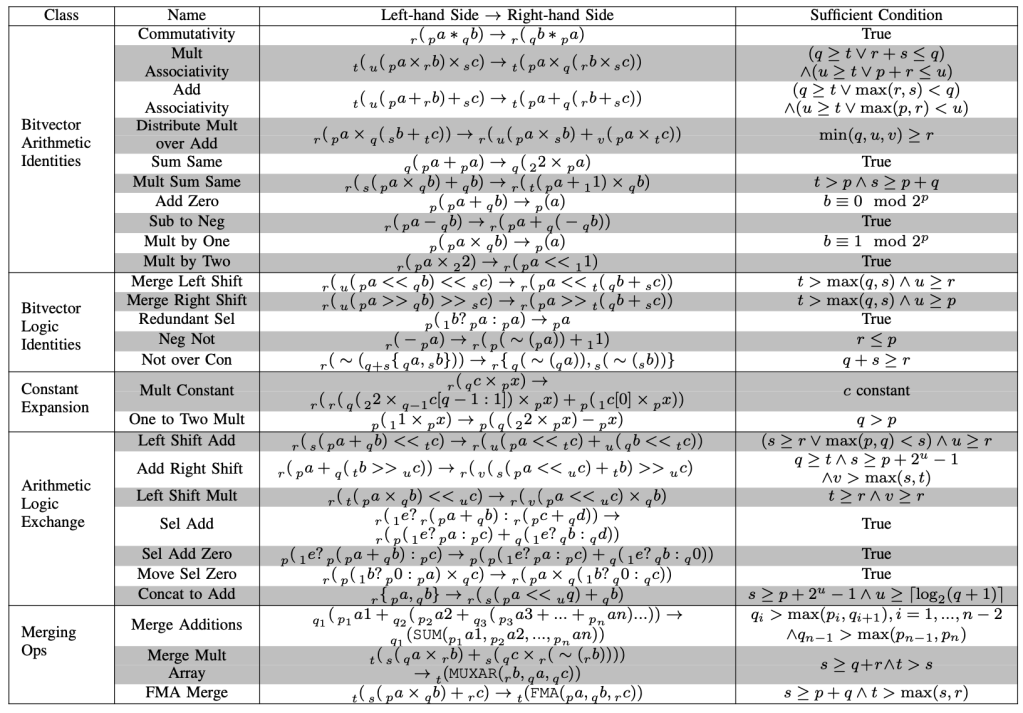

Our colleagues Willsey, Nandi, Wang, Flat, Tatlock and Panchekha recently published egg, a wonderful open source library for building and exploring data structures known as ‘e-graphs’, specifically designed to capture these relations on expressions. Sam, Theo and I have developed a set of ‘rewrites’ capturing some of the important intuition that Intel designers apply manually, and encoded these for use within egg. To give you a flavour of these rewrites, here’s the table from Sam’s paper; you can see the example we started with is hiding in there by the name ‘Mult by Two’. The subscripts are used to indicate how many digits we’re dealing with; not all these rules are true for arbitrarily-sized integers, and Sam has gone to some lengths to discover simple rules – listed here as ‘sufficient condition’ – for when they can be applied. This is really important in hardware, where we can use as few or as many bits as the job requires.

You can imagine that, when you have this many equivalences, they all interact and you can very quickly build up a very large set of possible equivalent expressions. e-graphs help us to compactly represent this large set.

Once our tool has spent enough time building such a representation of equivalences, we need to extract an efficient implementation as a hardware implementation. This is actually a hard problem itself, because common subexpressions change the hardware cost. For example if I’m calculating then I wouldn’t bother to calculate

twice. We describe in our paper how we address this problem via an optimisation formulation. Our tool solves this optimisation and produces synthesisable Verilog code for the resulting circuit.

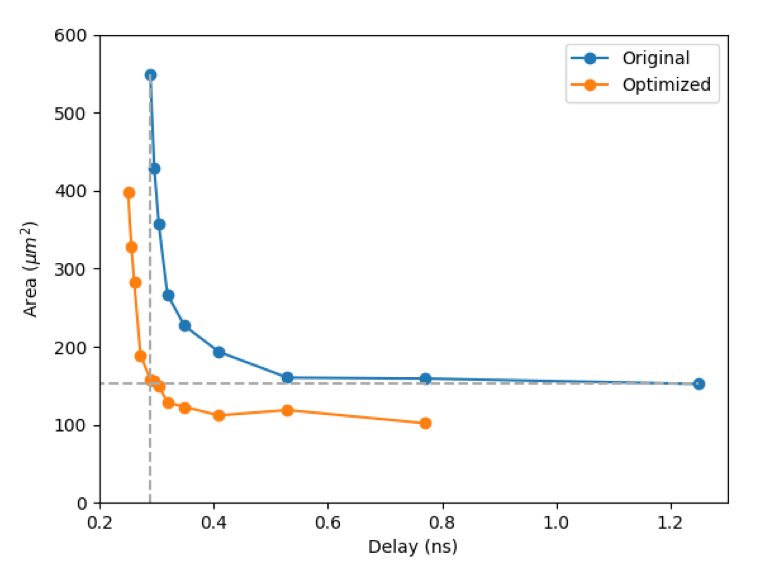

So, does it generate good circuits? It certainly does! The graph below shows the possible circuit area and performance achievable before (blue) and after (orange) the application of our tool flow before standard logic synthesis tools. For this example, silicon area can be reduced by up to around 70% – a very significant saving.

I’ve really enjoyed working on this topic with Sam and Theo. Lots more exciting content to follow. In the meantime, please tune in to hear Sam talk about it next week.