On the 16th May, my PhD student Jianyi Cheng (jointly advised with John Wickerson) will present his most recent paper “Dynamic C-Slow Pipelining for HLS” at FCCM 2022 in New York, the FPGA community’s first in-person conference since the pandemic hit.

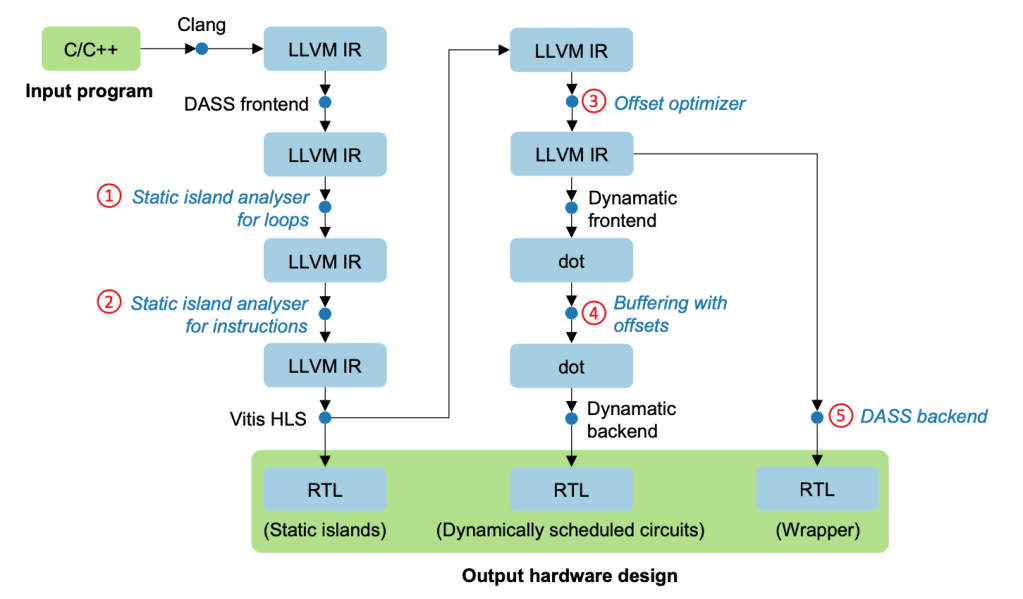

Readers of this blog may remember that Jianyi has been working on high-level synthesis techniques that combine the best of dynamic scheduling with the best of static scheduling [FPGA 2020, FCCM 2021]. The general principle underlying his work is to make the most of whatever information we have at compile time to develop highly efficient custom architectures, while leaving what we don’t know at compile time to influence execution at run-time.

A very common design pattern in hardware acceleration is C-slow pipelining. Pipelining tends to be taught early in undergraduate programmes, but C-slow pipelining rarely gets mentioned. The idea arises in circuits with feedback loops, where the basic approach to pipelining doesn’t really work. Just as with feed-forward circuits, we can throw multiple registers into the circuit, potentially improving clock frequency at the cost of latency. Unlike the feed-forward case, though, we can’t then overlap computation to achieve improved throughput, because of the data dependency corresponding to the feedback loop.

C-slow pipelining essentially says “OK, but you can use the spare capacity induced by the pipeline registers to overlap computation of independent streams of data, if you happen to have them available.”
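To make the throughput argument concrete, here is a minimal software sketch of the idea. It is a toy cycle-level model, not the hardware from the paper or anything produced by Dynamatic: the loop body `step`, the function `simulate`, and the chosen pipeline depth are all hypothetical names and values picked for illustration. The model assumes the loop body is a `depth`-stage pipeline that accepts at most one new value per cycle, and that a value can only re-enter once its previous iteration has left the pipeline.

```python
from collections import deque


def step(x):
    """Hypothetical loop body: the next value depends on the previous one."""
    return (3 * x + 1) % 1000003


def simulate(initial_values, iterations, depth):
    """Toy cycle-level model of a feedback loop with a `depth`-stage body.

    Each independent stream must run `iterations` loop iterations.
    Returns (final value per stream, total cycles taken).
    """
    n = len(initial_values)
    pipeline = deque([None] * depth)  # right end is the last pipeline stage
    # Tokens waiting to enter the pipeline: (stream id, value, iterations done)
    waiting = deque((s, v, 0) for s, v in enumerate(initial_values))
    results = [None] * n
    done = 0
    cycles = 0
    while done < n:
        cycles += 1
        out = pipeline.pop()  # token leaving the last stage, if any
        if out is not None:
            stream, value, count = out
            value, count = step(value), count + 1
            if count == iterations:
                results[stream] = value
                done += 1
            else:
                # Feedback: this stream's next iteration is now ready to issue
                waiting.append((stream, value, count))
        # Issue at most one token per cycle; otherwise insert a bubble
        pipeline.appendleft(waiting.popleft() if waiting else None)
    return results, cycles


if __name__ == "__main__":
    streams = [1, 2, 3, 4]
    # One stream at a time: the pipeline is mostly bubbles
    one_by_one = sum(simulate([v], 10, 4)[1] for v in streams)
    # C-slowed: four independent streams interleaved in a 4-stage pipeline
    _, interleaved = simulate(streams, 10, 4)
    print(one_by_one, interleaved)
```

In this model a single stream keeps only one of the four stages busy at any time, whereas four interleaved streams fill every stage, so the same work finishes in roughly a quarter of the cycles. The final values are identical in both cases; only the schedule changes.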

Our new paper introduces a dynamic HLS flow for C-slow pipelining. This is particularly valuable in a globally dynamic environment in which certain components exhibit static control flow and can be efficiently pipelined: for example, some deep but predictable computation that must be repeated many times, but where the arrival times and sources for this computation may change dynamically at run-time. This is a perfect fit for our prior work.

Jianyi presents a way to leverage the Boogie language and tool flow from Microsoft Research to automatically prove sufficient conditions for C-slowing to be correct. He is then able to introduce a new hardware component within the Dynamatic HLS tool that allows the schedule to “run ahead” to implement certain bounded out-of-order executions corresponding to C-slowing at the circuit level.

At the cost of a small area overhead in the region of 10%, this combined software analysis and hardware transformation is able to reduce wall-clock execution time by more than half compared to the vanilla dynamic scheduling approach.

If you’ll be in NYC in mid-May, go along and hear Jianyi’s talk!