I have just returned from a wonderful trip to California with colleagues and students, into which I managed to pack: visiting AMD for a day of presentations to each other, co-running a workshop on Spatial Machine Learning, attending the ACM FPGA 2024 conference at which my student Martin Langhammer presented, and catching up with international colleagues and with Imperial College alumni and their families. In this blog post I’ll briefly summarise my key work-related takeaways from the trip.

AMD and Imperial

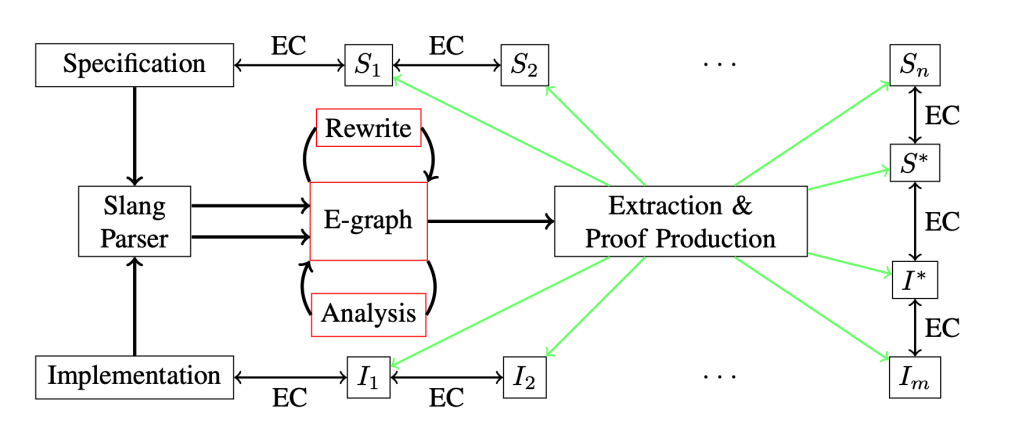

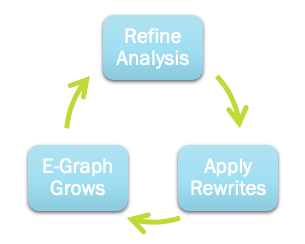

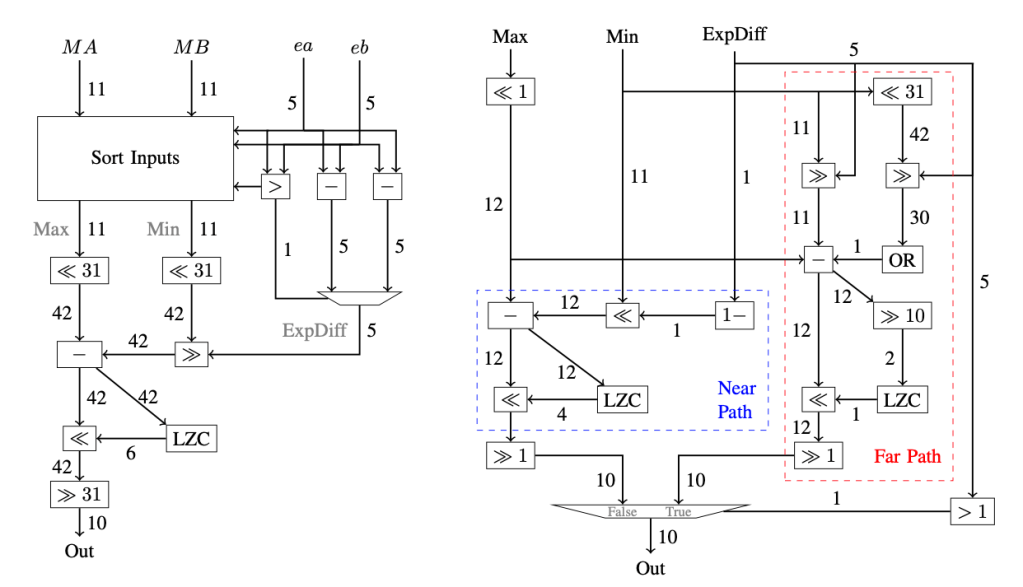

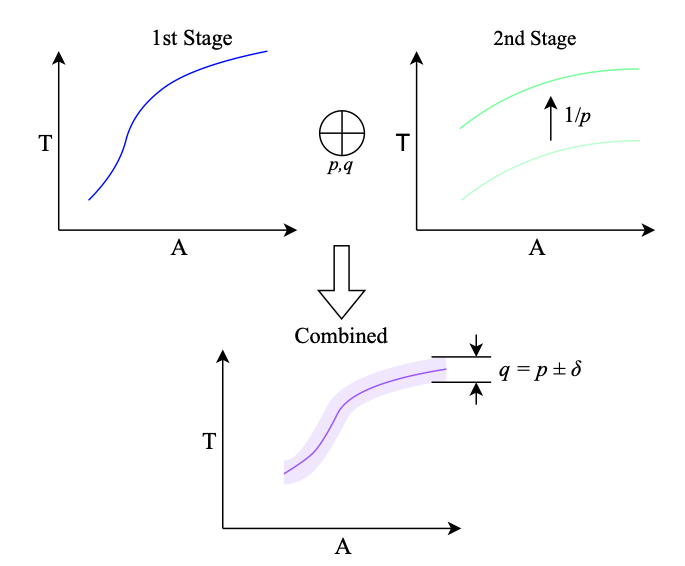

At AMD’s Xilinx campus, I was able to share some of our most recent work. I chose to focus on two aspects: our work on e-graphs as an EDA data structure, including how we have developed them for datapath optimisation; and the development of new, highly efficient LUT-based neural networks. It was great to catch up with AMD on the latest AI Engines developments, especially given the imminent open-sourcing of a complete design flow here. My former PhD students Sam Bayliss and Erwei Wang have been working hard on this – amongst many others, of course – and it was great to get a detailed insight into their work at AMD.

SpatialML Workshop

Readers of this blog may know that I currently lead an international centre on spatial machine learning (http://spatialml.net). This year we ran an open workshop, sharing the work we have been doing in the centre, and bringing in additional colleagues from outside the centre too. This was exceptionally well attended, with delegates from academia and industry around the world: A*STAR, AMD, Ciena, Cornell, DRW, Essen, Groq, Imperial College, Intel, KAUST, Mangoboost, Minnesota, Rhode Island, Seoul, Simon Fraser, Southampton, Tsinghua, Toronto, and UCLA. We structured the day around a number of excellent academic talks from Zhiru Zhang (Cornell), Vaughn Betz (University of Toronto), Paul Chow (University of Toronto), Lei Xun (University of Southampton), Atefeh Sohrabizadeh (UCLA), and Alex Montgomerie (Imperial College), combined with industrial keynotes from Satnam Singh (Groq) and Sam Bayliss (AMD), closing with a panel discussion on the topic of working across abstraction layers; we had Jason Anderson (U of T), Jason Cong (UCLA), Steve Neuendorffer (AMD) and Wayne Luk (Imperial College) on the panel, with me chairing (sadly Theo Drane from Intel could not make it due to poor weather conditions in Northern California). Slides that are publicly sharable will be made available on the workshop website.

We learned about the use of machine learning (graph representation learning, GNNs) in EDA flows, about scaling LLM implementations across multiple devices in industry and academia, about dynamic DNNs and resource scaling, about programming models and flows for deep learning acceleration in both academia and industry (often using MLIR), and about FPGA architectural enhancements to support more efficient deep learning.

We received very positive feedback from workshop attendees. I would like to express particular thanks to my workshop co-organisers, especially the astoundingly productive Andrew Boutros of the University of Toronto, for all his hard work making this workshop happen.

FPGA 2024

I consider the ACM FPGA conference my “home” conference: I’m a steering committee member and former chair, but this year my only roles were as a member of the best paper committee and as a session chair, so I could generally sit back and enjoy listening to the high-quality talks and interacting with the other delegates. This year Andrew Putnam from Microsoft was technical program chair and Zhiru Zhang from Cornell was general chair. There is of course too much presented in a conference to try to summarise it all, but here are some highlights for me.

- Alireza Khataei and Kia Bazargan had a nice paper, “CompressedLUT: An Open Source Tool for Lossless Compression of Lookup Tables for Function Evaluation and Beyond”, on lossless compression of large lookup tables for function evaluation.

- Ayatallah Elakhras, Andrea Guerrieri, Lana Josipović, and Paolo Ienne have done some great work, “Survival of the Fastest: Enabling More Out-of-Order Execution in Dataflow Circuits”, on enabling (and localising) out-of-order execution in dynamic high-level synthesis, extending the reach of out-of-order execution beyond the approach I took with Jianyi Cheng and John Wickerson.

- Louis-Noël Pouchet, Emily Tucker and coauthors have developed a specialised approach to checking equivalence of two HLS programs, “Formal Verification of Source-to-Source Transformations for HLS”, for the case where there are no dynamic control decisions (common in today’s HLS code), based on symbolic execution, rewriting, and syntactic equivalence testing. It basically does what KLEE does for corner cases of particular interest to HLS, but much faster. Their paper won the best paper award at FPGA 2024.

- Jiahui Xu and Lana Josipović had a nice paper, “Suppressing Spurious Dynamism of Dataflow Circuits via Latency and Occupancy Balancing”, allowing for a smoother tradeoff between dynamic and static execution in high-level synthesis than was possible in the early work of my PhD student Jianyi Cheng on this topic. They achieve this by balancing paths for latency and token occupancy in a hardware-efficient way.

- Daniel Gerlinghoff and coauthors had a paper, “Table-Lookup MAC: Scalable Processing of Quantised Neural Networks in FPGA Soft Logic”, building on our LUTNet work and extensions thereof, introducing various approaches to scale down the logic utilisation via sequentialisation over some tensor dimensions and over bits in the linear (LogicNets) case.

- My PhD student Martin Langhammer (also with Altera) presented his work, “A Statically and Dynamically Scalable Soft GPGPU”, on a super high clock frequency soft GPU for embedding into FPGA designs.

Reflections

I’ve come away with many ideas from all three events: the AMD visit, the workshop, and the FPGA conference. In-person attendance at conferences is still the best for this; I didn’t get nearly as many ideas when attending FPGA 2021 or 2022 remotely. It was also particularly satisfying to see our work on soft-logic efficient deep neural networks (starting with LUTNet, most recently PolyLUT) being cited by so many people at the conference; this work appears to have really made a long-term impact.

Finally, it is always a joy to visit Monterey. This year FPGA was held at a hotel on Cannery Row, described by John Steinbeck as “a poem, a stink, a grating noise, a quality of light, a tone, a habit, a nostalgia, a dream”.