Readers of this blog will know that I have been critical of the Government’s assessment system for the new National Curriculum in England [1,2,3]. I therefore greet the Secretary of State’s recently launched consultation over the future of primary assessment with a cautious welcome, especially since it seems to follow well from the NAHT’s report on the topic.

What is Statutory Assessment for?

The consultation document states the aim of statutory assessment as follows:

Statutory assessment at primary school is about measuring school performance, holding schools to account for the work they do with their pupils and identifying where pupils require more support, so that this can be provided. Primary assessment should not be about putting pressure on children.

Firstly, let me lay my cards on the table: I do think that school “performance” deserves to be measured. My experiences with various schools suggest strongly that there are under-performing schools, which are in need of additional support to develop their educational practice. There is a subtle but telling difference between these perspectives: my own emphasises support, while the Government’s emphasises accountability. While some notions of accountability in schools appear uncontroversial, the term has recently become associated with high-stakes educational disruption rather than with improving outcomes for our children. We can definitely agree that primary assessment should not be about putting pressure on children; unfortunately, I don’t believe that the consultation proposals seriously address this question.

Consultation Questions

In this section, I focus on the questions in the Government’s consultation on which I have a strong opinion; these are by no means the only important questions.

Q2. The EYFSP currently provides an assessment as to whether a child is ‘emerging, expecting [sic] or exceeding’ the level of development in each ELG. Is this categorisation the right approach? Is it the right approach for children with SEND?

Clearly the answer here primarily depends on the use of these data. If the aim is to answer questions like “how well-aligned – on average – are children with the age-related expectations of the early-years curriculum at this school?” then this assessment scheme is perfectly reasonable. Nor does it need to be tuned for children with SEND who may have unusual profiles, because it’s not about individual pupils, nor indeed for high attaining children who may be accessing later years of the national curriculum during their reception years. But if it’s about understanding an individual learning profile, for example in order to judge pupil progress made later in the school, then any emerging / expected / exceeding judgement seems far too coarse. It groups together children who are “nearly expected” with those well below, and children who are “just above expected” with those working in line with the national curriculum objectives for half way up the primary school – or beyond.

Q3. What steps could we take to reduce the workload and time burden on those involved in administering the EYFSP?

Teacher workload is clearly a key issue. But if we are talking seriously about how to control the additional workload placed on teachers by statutory assessment, then this is an indication that our education system is in the wrong place: there should always be next to no additional workload! Assessment should be about driving learning – if it’s not doing that, it shouldn’t be happening; if it is doing that, then it should be happening anyway! So the key question we should be answering is: why has the statutory framework drifted so far from the need to support pupils’ learning, and how can we fix this?

Q5. Any form of progress measure requires a starting point. Do you agree that it is best to move to a baseline assessment in reception to cover the time a child is in primary school (reception to key stage 2)? If you agree, then please tell us what you think the key characteristics of a baseline assessment in reception should be. If you do not agree, then please explain why.

[… but earlier …]

For the data to be considered robust as a baseline for a progress measure, the assessment needs to be a reliable indicator of pupils’ attainment and strongly correlate with their attainment in statutory key stage 2 assessments in English reading, writing and mathematics.

I agree wholeheartedly with the statement regarding the requirements for a solid baseline progress measure. And yet we are being offered up the possibility of baselines based on the start of EYFS. There is no existing data on whether any such assessment strongly correlates with KS2 results (and there are good reasons to doubt it). If the government intends to move the progress baseline from KS1 down the school, then a good starting point for analysis would be the end of EYFS – we should already have data on this, although from the previous (points-based) EYFS profile. So how good is the correlation of end-of-EYFS and KS2? Because any shift earlier is likely to be worse, so at least this would provide us with a bound on the quality of any such metric. Why have these data not been presented?

It would, in my view, be unacceptable to even propose to shift the baseline assessment point earlier without having collected the data for long enough to understand how on-entry assessment correlates with KS2 results, i.e. no change should be proposed for another 6 years or so, even if statutory baseline assessments are introduced now. Otherwise we run the risk of meaningless progress metrics, with confidence intervals so wide that no rigorous statistical interpretation is possible.
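To make the point about wide confidence intervals concrete, here is a minimal sketch. It assumes a simple linear prediction of KS2 attainment from the baseline score, in which case the spread of pupils around the prediction is the KS2 standard deviation multiplied by the square root of (1 − r²), where r is the correlation. The function name and the values of r are purely illustrative assumptions; they are not taken from any published data.

```python
import math

def unexplained_spread(correlation: float) -> float:
    """Residual standard deviation of a linear prediction, expressed as a
    fraction of the standard deviation of the outcome (here, KS2 attainment)."""
    return math.sqrt(1.0 - correlation ** 2)

# Illustrative correlations only: none of these is a measured value.
for r in (0.3, 0.5, 0.7, 0.9):
    share = unexplained_spread(r)
    print(f"r = {r:.1f}: residual spread = {share:.2f} of the KS2 standard deviation")
```

Under this assumption, even a fairly strong correlation leaves most of the pupil-level spread unexplained, which is why the quality of the baseline matters so much for any progress metric built on top of it.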

Q9. If a baseline assessment is introduced in reception, in the longer term, would you favour removing the statutory requirement for all-through primary schools to administer assessments at the end of key stage 1?

The language is telling here: “to administer assessments.” If this were phrased as “to administer tests,” then I would be very happy to say “yes!” But teachers should be assessing – not examining – pupils all the time, in one form or another, because assessment is a fundamental part of learning. So really the question is the form of these assessments, and how often they should be passed up beyond the school for national comparison. Here the issue is more about the framework of support in which a school finds itself. If a school is “left to its own devices” with no local authority or other support for years (a common predicament at the moment with the abolition of the Education Services Grant by the Government!) then it may well be too long to wait six-and-a-half years before finding out that a school is seriously under-performing. Yet if the school exists within a network of supportive professionals from other schools and local authorities who have the time and resource to dig deeply into the school’s internal assessment schemes during the intervening years, these disasters should never happen. A prerequisite for a good education system is to resource it appropriately!

Q11. Do you think that the department should remove the statutory obligation to carry out teacher assessment in English reading and mathematics at key stage 2, when only test data is used in performance measures?

I think this is the wrong way round. Schools should only be required to report teacher assessment (and it should be “best fit”, not “secure fit”); tests at Key Stage 2 should be abolished. This would be fully consistent with high quality professional-led, moderated assessment, and address the very real stress placed on both children and teachers by high-stakes testing schemes. Remember the consultation document itself states “Primary assessment should not be about putting pressure on children.”

Q14. How can we ensure that the multiplication tables check is implemented in a way that balances burdens on schools with benefit to pupils?

By not having one. This is yet another situation where a tiny sliver of a curriculum (in this case tedious rote learning of multiplication tables) is picked out and elevated above other equally important elements of the curriculum. Boaler has plenty to say on this topic.

Q15. Are there additional ways, in the context of the proposed statutory assessments, that the administration of statutory assessments in primary schools could be improved to reduce burdens?

The best way to reduce the burden on schools seems to be to more closely align formative and summative assessment processes. However, schools have been explicitly encouraged to “do their own thing” when it comes to formative assessment processes. The best way the Government could help here is by commissioning an expert panel to help learn from the best of these experiments, combining what has been learnt with the best international educational research on the topic, and re-introducing a harmonised form of national in-school assessment in the primary sector.

Best Fit or Secure Fit?

The consultation appears to repeat the Government’s support for the “secure fit” approach to assessment. The document states:

The interim teacher assessment frameworks were designed to assess whether pupils have a firm grounding in the national curriculum by requiring teachers to demonstrate that pupils can meet every ‘pupil can’ statement. This approach aims to achieve greater consistency in the judgements made by teachers and to avoid pupils moving on in their education with significant and limiting gaps in their knowledge and skills, a problem identified under the previous system of national curriculum levels.

The key word here is every. This approach has been one of the key differentiators from the previous national curriculum assessment approach. I have argued before against this approach, and I stand by that argument; moreover, there are good statistical arguments that the claim to greater consistency is questionable. We are currently in the profoundly odd situation where teacher assessments are made by this “secure fit” approach, while tests are more attuned with a “best fit” approach, referred to as “compensatory” in previous DfE missives on this topic.

However, the consultation then goes on to actually suggest a move back to “best fit” for writing assessments. By removing the requirement for teacher assessments except in English, and relying on testing in KS2 for maths and reading, I expect this to be a “victory for both sides” fudge – secure fit remains in theory, but is not used in any assessment used within the school “accountability framework”.

High Learning Potential

The consultation notes that plans for the assessment of children working below expectation in the national curriculum are considered separately, following the result of the Rochford Review. It is sad, though not unexpected, that once again no particular mention is given to the assessment of children working well above the expectation of the national curriculum. This group of high attaining children has become invisible to statutory assessment, which bodes ill for English education. In my view, any statutory assessment scheme must find ways to avoid capping attainment metrics. This discussion is completely absent from the consultation document.

Arithmetic or Mathematics?

Finally, it is remarkable that the consultation document – perhaps flippantly – describes the national curriculum as having been reformed “to give every child the best chance to master reading, writing and arithmetic,” reinforcing the over-emphasis of arithmetic over other important topics still hanging on in the mathematics primary curriculum. It is worth flagging that these changes of emphasis are distressing to those of us who genuinely love mathematics.

Conclusion

I am pleased that the Government appears to be back-tracking over some of the more harmful changes introduced to primary assessment in the last few years. However, certain key requirements remain outstanding:

- No cap on attainment

- Baselines for progress measures to be based on good predictors for KS2 attainment

- Replace high-stress testing on a particular day with teacher assessment

- Alignment of summative and formative assessment and a national framework for assessment

- Well-resourced local networks of support between schools for support and moderation

As I have said on PHP

I am not interested in understanding SEN policy when not concerned with gifted children (I have other things to do, there are others more qualified and it doesn’t apply to my child). But I hate the cap on attainment, hate the way assessments are graded (which does not give you as a parent enough information to guide and assist your child). Also I think that more needs to be done for HLP children in the way of support and stimulation and allowing them to progress at the speed they are capable of if they choose and are supported emotionally, instead of forcing them in with age group based “peers”.

Basically take off the brakes on these kids as the current system is like making them drive with the handbrake on.

I have given a more detailed response to the consultation itself, including the recommendation that all children be independently screened for HLP prior to entry into the school system.


Michael Tidd (@MichaelT1979) always has interesting things to say about assessment. He has recently written this article in the TES: https://www.tes.com/news/school-news/breaking-views/predicting-progress-its-very-height-ridiculousness. Since the TES website doesn’t seem to allow commenting, I will pick up some of the points he makes here, since they relate to primary assessment, even if not directly to the DfE consultation.

1. Michael makes the very important point that attainment (and therefore, indirectly, progress) is susceptible to inherent variations across pupils. He compares to height, and points out that centiles are used here. Quite right – more should be done to utilise the distribution of children’s attainment when coming to judgements on the progress made both by a child (very susceptible to large variations) and the average for a cohort (less susceptible to large variations). To be fair, this information is present in reports like RAISEOnline, though school leaders, LAs and some inspectors don’t always look at the detail. But just because something is susceptible to variation doesn’t devalue its measurement. Consider the final statement Michael makes at the end of the article: “Six points of progress each year – or three, or 10 for that matter – is no more useful an indicator of children’s success than expecting to grow exactly 5cm between birthdays. We’d do well to stop pretending otherwise.” Just because we don’t expect all children to grow the same amount doesn’t mean we shouldn’t measure their height. Because if they stop growing, or grow in a very unusual way, they are usually referred to a paediatrician. Not because there is something wrong necessarily, but because we need to ask why and to understand their unusual growth. The same is true with progress.

2. Michael suggests that it would be foolish to try to predict a child’s age from an example of their work. He states that “you might estimate what year group they were in, absolutely – probably based as much on your knowledge of the curriculum as anything, but nobody would sensibly try to estimate their age down to the nearest couple of months, surely?” There are two quite distinct points to make here. Firstly, yes it is entirely possible to predict a child’s age from their work – I’m not a primary school teacher yet I can tell you whether a given book is more likely to be from a Year 1 or a Year 6 – though of course there is a good level of uncertainty associated with such an estimate, and I’m very happy to accept the assertion that the level of uncertainty is much greater than a couple of months. This is uncontroversial. However, the statement “based as much on your knowledge of the curriculum as anything” rings alarm bells to me. It suggests that *despite* all the variation in attainment quite correctly pointed to by Michael, walking into a school today we are likely to see children taught material based on their age, not their capability. This is sad, in my view, especially since the National Curriculum programmes of study quite clearly allow schools to take the variation into account: “Within each key stage, schools therefore have the flexibility to introduce content earlier or later than set out in the programme of study. In addition, schools can introduce key stage content during an earlier key stage, if appropriate.”

3. Michael states that “Somehow, it has become acceptable to consider progress through the academic year as a series of six steps, as though every two months will see an identical change in attainment for everyone.” Again there are a few quite distinct issues here. The first one is whether it is reasonable to measure progress as “a series of steps.” The second is whether all children will exhibit the same number of steps of progress over a given period of time. The final point is whether six is indeed “the magic number” – or three – or any other number. The second point is easiest to deal with – of course not all children progress at the same rate or in a linear fashion – any assessment scheme expecting this is as ridiculous as Michael suggests. Yet that doesn’t stop us drawing a best fit line through rates of progress and asking questions about why particular children are making more or less progress than their peers. Maybe there’s no reason, maybe there is some magic happening we could all learn from. You don’t know until you look. The first and third points depend on what a step means. At one extreme – and I think this is what Michael is complaining about – stepped progress means, for example, “can’t compare and order fractions”, “can compare and order fractions a tiny bit”, “can compare and order fractions a bit”, “can compare and order fractions reasonably”, “can compare and order fractions quite well”, “can compare and order fractions very well”, “a master at comparing and ordering fractions” (though he makes the point with writing). Of course this is ridiculous. Yet it’s not the way I’ve seen schools successfully use a three or six-point progress summary – schools I’ve talked to seem to use them to record *the proportion* of different statements in the national curriculum that have been successfully mastered by pupils rather than how well they’ve mastered an individual statement. With this in mind it certainly seems reasonable to assess as “having met about a third of the statements for Year 5”.

So, in summary, a thought provoking article from Michael Tidd, as always. But I see no reason to abandon stepped progress measures. Indeed I don’t see any suggestion for an alternative that is anything other than a stepped progress measure in disguise. Is there?


Interesting comments, thank you George. A few things (aside from the fact that it is, of course, an article intended to stoke views rather than provide a clear assessment framework!):

1. I don’t think we disagree on the importance of measuring. I’m in favour of decent testing as a way of getting an indication of attainment/progress. My point in the article is that we shouldn’t be attempting to interpret measures taken so frequently. If we insist on making a judgement every 6 weeks (which is what is implied by the 6-step model), then we are likely to be confusing noise for meaningful information. Just as we wouldn’t panic if a child hadn’t grown the expected 0.4cm in two months (or whatever it might be), so it would be useless to draw any great inference from a single step’s movement up/down (or not at all) over such a short period.

2. Yes, as I say, identifying the age to the nearest year could give a reasonable estimate, but to within a couple of months would be crazy – yet that is what the 6-step model implies.

As for the impact of curriculum, while it’s true that children have different prior attainments, they are nevertheless in classes of 30+. In my Y6 class I have children who might be writing at a level you might expect of a Y3, yet they may also have had a go at using dashes because it was covered in a lesson along with their peers. That might be the clue that gives away their age unrelated to their apparent ability otherwise.

3. You’re mistaken about my interpretation of 6 steps. I’m not referring to “can do X a tiny bit”, although I do see systems like that (with more than 6 steps sometimes!). It’s more about the interpretations being drawn from systems like the one you suggest based on percentages of objectives met. If a child has met 17% of objectives then they are “on track” for October, but if they’ve met 16% of them then they are “one step” behind. Consider further if the child on 17% can use Roman numerals, but not place value. They appear to be on-track yet have a significant gap, while the other child who is behind may have a secure understanding of this key concept. This is of course nonsense, and is the result of artificial thresholds. It would be the equivalent of saying that an 8 year old child who is 2cm “too short” is a cause for concern.

You seem quite happy with the 3/6 steps model (or perhaps only with the 3-step idea based on your final comment), but what does it add to our understanding? I don’t see that it’s useful, and it certainly isn’t a measure – it’s a category. Categories are sometimes useful for comparing whole cohorts on occasion, but every 6 weeks?


Thanks for the reply and clarification, Michael. As you say, I think we agree on a lot. Some further thoughts:

1. Frequency of measurement. Let’s consider what drives this. Firstly, even with perfect information, it’s not reasonable to measure more frequently than we can make use of the information (which is probably the initial motivation for half-termly?) Secondly, if we spend all our time collating summary metrics and not teaching, that does nobody any benefit. But the third point is the key one in this discussion: how does uncertainty impact on frequency? The less average movement within a given time period, the less frequently we should measure, for a fixed variability of the data. On this, I am happy that your expertise suggests that variation for an individual child is so large that half-termly measurement is (relatively) meaningless. Perhaps even termly – though if it’s about early detection of potential issues rather than “performance management” or some other summative use of data – this is probably arguable. However if we’re averaging over a cohort to get cohort metrics, the variation decreases. So half-termly for a class or a year group might well be meaningful, even if termly for an individual pupil is only borderline.
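A minimal sketch of why cohort averages are steadier than individual measurements: if pupil-level noise is independent from pupil to pupil (an assumption, and a generous one), the standard error of a cohort mean falls with the square root of the cohort size. The function name and the noise level of 1.0 “step” are hypothetical, chosen only for illustration.

```python
import math

def standard_error(pupil_sd: float, cohort_size: int) -> float:
    """Standard error of a cohort mean, assuming independent pupil-level noise."""
    if cohort_size < 1:
        raise ValueError("cohort_size must be at least 1")
    return pupil_sd / math.sqrt(cohort_size)

# Hypothetical pupil-level noise of 1.0 "step"; not a figure from real data.
pupil_sd = 1.0
for n in (1, 15, 30, 60):
    print(f"cohort of {n:2d}: standard error = {standard_error(pupil_sd, n):.2f} steps")
```

Going from one pupil to a class of 30 cuts the noise by a factor of about five and a half under this assumption, so a movement that is meaningless for one child may still be informative for a class.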

2. Your first point about objective proportions is one relating to granularity of thresholds. I agree with this – if we collect data at the level of individual objectives, why then round that data to a very coarse level before assessing progress – use the full precision available to us: 16% versus 17% in this case; it’s like rounding a child’s height to the nearest 10cm before looking it up in the centile table. I take your point about a child 2cm “too short”, but if an 8 year old child is 15cm shorter than average then a referral actually is recommended (at least according to the BSPED https://www.bsped.org.uk/)!

3. Your final question “what does it add to our understanding” is key. I think primarily what it adds is the ability of school leaders and governors to quickly grasp which classes and groups of pupils might need extra investigation. As always, data is the start of a discussion about “why”, never the end point.

Thanks again.


Hi George – interesting discussion!

1. I think the issue about accuracy is key. If you fancy running a scorable test every term, then that seems fine to me. However, even with something so precise, as you say, at the individual level there are risks. At cohort level it then might become useful, although most primary cohorts are small – some very small – so such data again is hazardous (and bear in mind that we’re talking about largely untrained statistical eyes here!). More to the point, in fact most such judgements are a broad teacher assessment judgement (or worse, a calculated total of a number of teacher assessment judgements). I think it unwise to be trying to draw such regular inferences from such fuzzy information.

Perhaps more regular data on a specific task is useful, e.g. knowledge/recall of number bonds. Part of the problem here is that we’re often talking about broad judgements across a fairly large domain (e.g. Writing) and then trying to imagine a category system that shows a step of progress every 6 weeks. I don’t believe that any teacher could reasonably define the difference between an October average writer and a February average writer, let alone a December one.

That’s the bit I have trouble with. I collect near daily data on tables tests, but I don’t imagine that the child who scored 56 yesterday and 54 today has got worse any more than the reverse would suggest improvement. Perhaps my biggest concern is the sense of precision which doesn’t really exist. Which links to point 2.

2. With height we can very accurately measure children, comfortably to the nearest 1/2cm, perhaps the nearest millimetre – but as you say, we would only be gravely concerned by a 15cm difference. Medical practitioners might choose to monitor someone who was 10cm shorter than expected more regularly. Even that height difference represents well over a year of typical growth.

We come back again to the cohort issue. Perhaps over large groups we might iron out some of the issues, but if we measured heights of two classes of children every 6 weeks, and one seemed to fall behind, at what point would we feel that there was enough statistical significance to warrant drawing any conclusions? We certainly wouldn’t base it on a single half-term’s data, I’m sure you’d agree?

3. Yes, this is the key. And perhaps my main point here is that by attempting to draw conclusions so regularly, we add to the likelihood of error. It would concern me if a governing body were drawing conclusions about the quality of teaching in any given class because in one class 45% of children had reached step 18, but in a parallel class only 40% had. That’s quite possibly 2 children, each lacking one concept. I would consider that to be well within the likely margin of error for such judgements. The level of precision we are able to attain at such small scale doesn’t merit the effort it might take, while the risks of collecting such data and sharing it with those who are not statistically-minded (such as the vast majority of those in education!) are far greater. Not only is it probably not useful, I’d argue that it could be positively damaging.


Interesting discussion indeed!

One issue you highlight is that these data are often interpreted by people without the statistical skills to understand the uncertainty and its implications. I agree. But I think there is no way to avoid uncertainty – it is inherent, even with testing. So really we should be upskilling our school leaders and our governors, in my view.

The other issue worth picking up relates to the statement “I don’t believe that any teacher could reasonably define the difference between an October average writer and a February average writer”. If we use the coverage metric, wouldn’t an October average writer be one with a small number of statements under his / her belt while the February writer would be one with about half the statements under his / her belt? This goes back to my initial point – I agree these positions are likely to be indistinguishable with respect to individual statements, but surely not with respect to the proportion of such statements achieved? (I will post separately below on notions of mathematical ordering of curriculum coverage, which touches on this point but is probably a little off on a tangent!)

I of course agree with you about a governing body hypothetically drawing conclusions about quality of teaching. But I would suggest that a good set of governors would not be using a lower proportion in one class than another to draw conclusions over quality of teaching but rather as a basis for discussion with the subject coordinator, as a prompt to ask “why is the proportion lower in Class A?” To which, I’m sure, any subject coordinator worth their salt will begin by answering in the way you have!

To answer the specific question you ask: “if we measured heights of two classes of children every 6 weeks, and one seemed to fall behind, at what point would we feel that there was enough statistical significance to warrant drawing any conclusions?” The standard statistical answer would be: when the probability of any such difference happening purely due to chance (due to a random allocation of children to classes combined with the inherent variation in children’s growth) is smaller than some significance value – typically 5% or 1%. I don’t know what that height difference is, but it can be calculated, because we know the distributions. So rigorous statistics can be applied. I don’t see the educational setting as any different, except – as you point out – the variations will be higher (including the measurement error).
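One way to estimate that probability without knowing the distributions in closed form is a permutation test, which is swapped in here as a simple stand-in for the calculation described above: shuffle the children between the two classes many times and count how often chance alone produces a gap at least as large as the one observed. This is a sketch only. The function name, the class sizes and the heights are all hypothetical; the heights are synthetic draws, not real measurements.

```python
import random
from statistics import fmean

def permutation_p_value(class_a, class_b, n_shuffles=10_000, seed=0):
    """Two-sided permutation test for a difference between two class means."""
    rng = random.Random(seed)
    observed = abs(fmean(class_a) - fmean(class_b))
    pooled = list(class_a) + list(class_b)
    n_a = len(class_a)
    extreme = 0
    for _ in range(n_shuffles):
        # Randomly re-allocate the same children to two classes.
        rng.shuffle(pooled)
        gap = abs(fmean(pooled[:n_a]) - fmean(pooled[n_a:]))
        if gap >= observed:
            extreme += 1
    return extreme / n_shuffles

# Synthetic heights in cm for two classes of 30 drawn from the SAME distribution
# (assumed mean 128 cm, standard deviation 6 cm; illustrative values only).
data_rng = random.Random(1)
class_a = [data_rng.gauss(128.0, 6.0) for _ in range(30)]
class_b = [data_rng.gauss(128.0, 6.0) for _ in range(30)]

p = permutation_p_value(class_a, class_b)
print(f"observed gap: {abs(fmean(class_a) - fmean(class_b)):.2f} cm, p-value: {p:.3f}")
```

A conclusion would only be warranted when the p-value falls below the chosen significance level (5% or 1%). The same procedure applies unchanged to attainment data, with the caveat that larger measurement error makes small gaps even less likely to pass the threshold.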


A mathematical interlude…

Michael’s comments have made me reflect a little further on questions of ordering of attainment, i.e. when can we say that one pupil has attained more than another? I’d like to explain this issue from an abstract mathematical perspective.

One option is not compare attainment at all, but generally this is the kind of summary information that is of great value to leaders, so let’s assume for the moment that we do want to devise such a way that we can talk about better or worse attainment. Let’s further assume that individual curriculum statements are indivisible: a pupil either “gets / can do” or “doesn’t get / can’t do” a particular concept / activity. To make things really simple, let’s also only consider ordering of attainment within a year group. Each year’s programme of studies contains a number of statements .

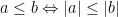

.

Each child’s attainment is actually a subset of these statements – those achieved. Clearly a property we would like to have between two attainments is that , that is if child b can do everything in the curriculum that child a can do then child b’s attainment is at least as high as child a’s. Let’s call these “meaningful orders”.

, that is if child b can do everything in the curriculum that child a can do then child b’s attainment is at least as high as child a’s. Let’s call these “meaningful orders”.

We could actually define and be done. Yet this leads to many incomparabilities which Michael alludes to. Is the child that can do

and be done. Yet this leads to many incomparabilities which Michael alludes to. Is the child that can do  and

and  higher or lower attaining than the child that can do

higher or lower attaining than the child that can do  ,

,  and

and  ? One option is to live with this. These children are genuinely incomparable in attainment, and that’s all there is to it. I think the intuitive adoption of such an ordering by set inclusion (https://en.wikipedia.org/wiki/Partially_ordered_set) is probably partly behind the rejection that an average February attainment is distinguishable from an average October attainment.

? One option is to live with this. These children are genuinely incomparable in attainment, and that’s all there is to it. I think the intuitive adoption of such an ordering by set inclusion (https://en.wikipedia.org/wiki/Partially_ordered_set) is probably partly behind the rejection that an average February attainment is distinguishable from an average October attainment.

But there are other meaningful orders that could be defined, such as ordering by cardinality: , which is effectively what I’ve argued for above and is in wide use in primary education. There are many, many, other choices of course. The advantage of this one is that it is a total order (https://en.wikipedia.org/wiki/Total_order), with all the nice mathematical properties that brings – properties heavily used throughout the primary education system. The disadvantage, as Michael implies, is that this is somewhat artificial.

, which is effectively what I’ve argued for above and is in wide use in primary education. There are many, many, other choices of course. The advantage of this one is that it is a total order (https://en.wikipedia.org/wiki/Total_order), with all the nice mathematical properties that brings – properties heavily used throughout the primary education system. The disadvantage, as Michael implies, is that this is somewhat artificial.

Take your pick: summary but artificial, or the inability to summarise but totally real. In practice, schools often choose the former for SLT and governors and the latter for classroom teachers. Perhaps with good reason!
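The two orderings can be sketched in a few lines, treating each child’s attainment as a set of statement labels. The labels `s1` to `s5`, the three example children and the function names are hypothetical, introduced only to illustrate the contrast.

```python
def at_least_by_inclusion(a: frozenset, b: frozenset) -> bool:
    """b is at least a when b contains every statement that a contains."""
    return a <= b

def at_least_by_cardinality(a: frozenset, b: frozenset) -> bool:
    """b is at least a when b contains at least as many statements as a."""
    return len(a) <= len(b)

def comparable_by_inclusion(a: frozenset, b: frozenset) -> bool:
    """Under set inclusion, two attainments are comparable only if one contains the other."""
    return a <= b or b <= a

# Hypothetical attainments for three children.
child_a = frozenset({"s1", "s2"})
child_b = frozenset({"s3", "s4", "s5"})
child_c = frozenset({"s1", "s2", "s3"})

print(comparable_by_inclusion(child_a, child_b))   # False: neither set contains the other
print(at_least_by_cardinality(child_a, child_b))   # True: 2 statements versus 3
print(at_least_by_inclusion(child_a, child_c))     # True: child_c can do all that child_a can
print(at_least_by_cardinality(child_a, child_c))   # True: the two orders agree on nested sets
```

Whenever set inclusion gives an answer, counting agrees with it, which is what makes counting a “meaningful order” in the sense above. Counting also always gives an answer, at the price of treating two quite different sets of the same size as equal in attainment.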


Some very sensible observations from the education select committee today https://www.tes.com/news/school-news/breaking-news/primary-assessment-five-warnings-issued-mps-today
